Advanced Quantum Kernel Methods

Exploring non-linear feature mapping through quantum Hilbert spaces — the mathematics behind quantum advantage in kernel machines

Introduction

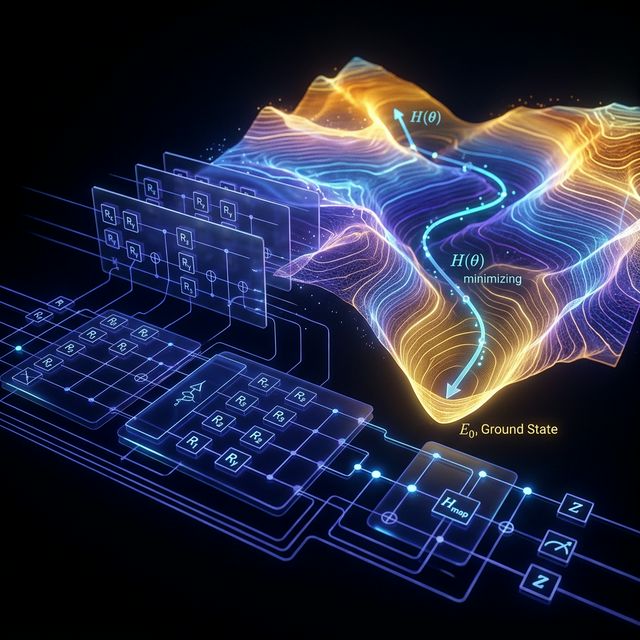

Quantum kernel methods represent one of the most theoretically grounded approaches to quantum machine learning. Unlike variational quantum circuits, which suffer from barren plateaus and trainability issues (see our article on QNN convergence), kernel methods shift the quantum computation to a fixed subroutine — the kernel evaluation — while the learning algorithm remains entirely classical.

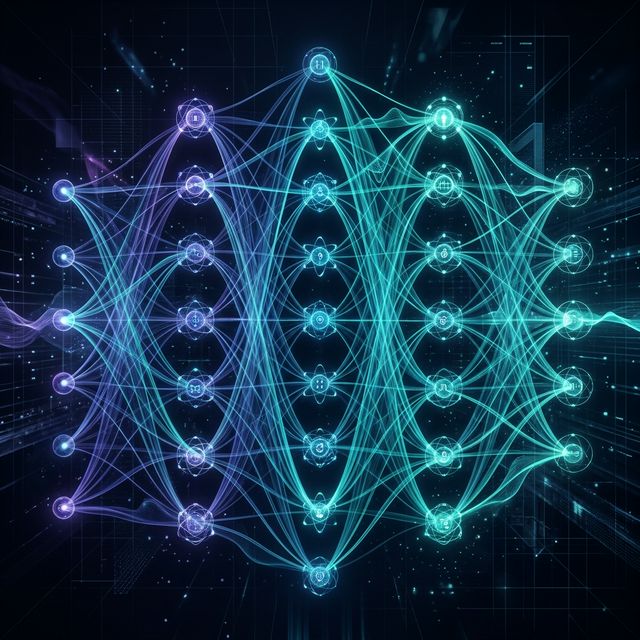

The key idea: use a quantum circuit as a feature map that embeds classical data into an exponentially large Hilbert space, then compute inner products in that space as a kernel function for classical algorithms like Support Vector Machines.

This article derives the full mathematical framework from scratch.

1. Classical Kernel Methods: The Foundation

Before introducing quantum kernels, we need to be precise about what a kernel is.

1.1 The Kernel Trick

Given a dataset with and , a kernel function is a symmetric positive semi-definite function:

where is a feature map into a Hilbert space (which may be infinite-dimensional), and is the inner product in .

By Mercer’s theorem, any continuous positive semi-definite function on a compact domain is a valid kernel corresponding to some feature map. This means we never need to explicitly compute — only the pairwise inner products .

1.2 The SVM Primal Problem

The hard-margin SVM finds the maximum-margin hyperplane in :

1.3 The Dual Problem

By the KKT conditions, the dual problem depends only on inner products:

subject to . The decision function is:

This is entirely computable from the kernel matrix without ever explicitly constructing . This is the kernel trick.

2. Quantum Feature Maps

2.1 Definition

A quantum feature map maps classical data to a density matrix in the space of bounded operators on an -qubit Hilbert space :

where is a parameterized unitary circuit that depends on the data point .

The quantum state lives in a Hilbert space of dimension — exponentially large in the number of qubits.

2.2 The Quantum Kernel Function

The quantum kernel is defined as the squared overlap (fidelity) between two quantum feature states:

This is exactly the probability of measuring the all-zeros bitstring after running the circuit on the zero state.

This is directly measurable on quantum hardware. No classical post-processing of exponentially large vectors is required — the kernel value is a measurement probability.

2.3 Positive Semi-Definiteness

The quantum kernel is a valid kernel function. Proof: The kernel matrix with entries is positive semi-definite because:

where is the Hilbert-Schmidt inner product. This is an inner product on the space of density matrices, so is positive semi-definite by construction.

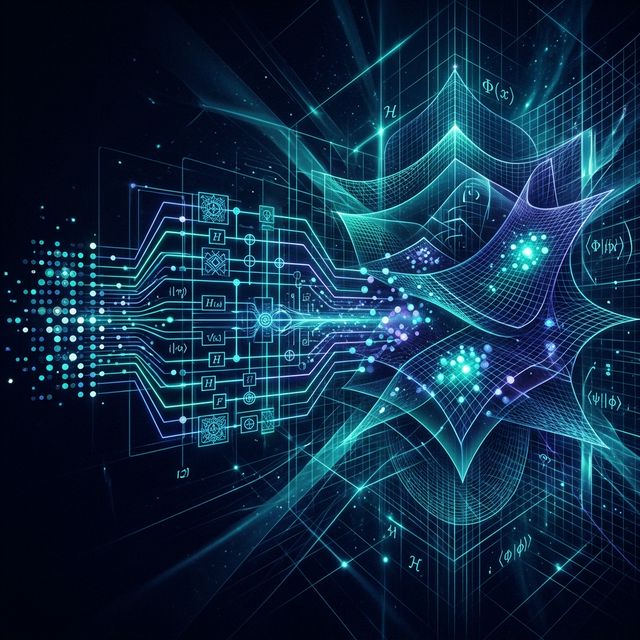

3. The IQP Feature Map (Havlíček et al., 2019)

The landmark paper by Havlíček et al. [2019, Nature] introduced a specific quantum feature map based on Instantaneous Quantum Polynomial (IQP) circuits and argued that the corresponding kernel is classically hard to estimate.

3.1 Circuit Construction

For qubits and input , the feature map circuit is:

The circuit applies two rounds of Hadamard gates interleaved with diagonal unitaries. The phase functions are:

where in practice only nearest-neighbor and next-nearest-neighbor pairs are included to keep the circuit implementable on hardware with limited connectivity.

3.2 Classical Hardness Argument

Havlíček et al. argue (with caveats) that classically simulating this kernel efficiently would imply a collapse of the polynomial hierarchy. Specifically, estimating to within additive error in polynomial time classically would require solving problems in efficiently, which is believed impossible.

Important caveat: This hardness is for worst-case inputs, not necessarily the data distributions encountered in practice. Whether this hardness translates to practical learning advantage remains an open and actively debated question [Schuld & Killoran, 2022; Huang et al., 2021].

4. The Kernel Matrix and Quantum Advantage

4.1 Computing the Full Kernel Matrix

For a training set of examples, computing the full kernel matrix requires quantum circuit evaluations. Each evaluation runs the circuit and measures in the computational basis, with the kernel value estimated as the frequency of the outcome over shots:

Statistical error in this estimate is by the central limit theorem. For -accurate kernel estimation, we need shots per entry.

4.2 When Can Quantum Kernels Win?

Huang et al. [2021, Nature Communications] proved a rigorous separation result: there exist learning problems for which:

for any classical kernel in a fixed class, given training examples. The constructed problem involves learning properties of quantum processes — not a natural classical ML problem, but a genuine provable advantage.

For classical data distributions, the picture is murkier. The quantum kernel must access features that are hard to compute classically for a genuine advantage. If the relevant features are easy to compute classically, then a classical kernel can match quantum performance [Schuld & Killoran, 2022, Physical Review Letters].

4.3 The Geometric Difference

Liu et al. [2021, Nature Physics] quantified the relationship between classical and quantum kernels via the geometric difference:

where and are the quantum and classical kernel matrices. When is large, the quantum kernel captures features that the classical kernel misses. Quantum advantage in generalization scales with .

5. Practical Considerations

5.1 Kernel Alignment

Not all quantum feature maps are useful. A feature map is well-suited to a task when the corresponding kernel has high target alignment:

where is the label vector and is the Frobenius inner product. High alignment means the kernel matrix naturally clusters data by class label. One can use this as a guide to design or select quantum feature maps before training.

5.2 Number of Qubits vs. Feature Dimension

For qubits, the feature space dimension is . This means:

| Qubits () | Feature space dim. | Classical equivalent |

|---|---|---|

| 10 | 1,024 | Feasible classically |

| 20 | 1,048,576 | Hard but not impossible |

| 50 | Far beyond classical | |

| 100 | Classically intractable |

However, not all dimensions are necessarily useful for a given learning problem. The intrinsic dimensionality of the learning problem may be much lower.

5.3 Shot Noise and Sample Complexity

A significant practical challenge: to estimate a single kernel entry to accuracy , we need shots. For a training set of examples, the full kernel matrix has unique entries. At shots per entry, this requires circuit executions. On current hardware running at circuits/second, this takes roughly seconds — far from practical.

This is a genuine bottleneck that current research is working to address via kernel approximation methods and more efficient circuit designs.

6. Current Status and Open Questions

The field of quantum kernels is active and honest about its limitations:

Established:

- Quantum kernels are valid kernel functions with quantum circuit estimation procedures [Havlíček et al., 2019]

- Provable learning advantage exists for specifically constructed quantum tasks [Huang et al., 2021]

- Kernel alignment provides a trainable way to optimize feature maps [Hubregtsen et al., 2022, Quantum Machine Intelligence]

Open:

- Whether quantum kernels provide advantage on practically relevant classical datasets

- How to design feature maps that balance expressibility with circuit depth constraints

- Efficient estimation methods that reduce the shot requirement

Conclusion

Quantum kernel methods are one of the most mathematically rigorous approaches in quantum ML. They avoid the barren plateau problem entirely and connect directly to well-understood classical learning theory. The quantum speedup, where it exists, comes from the hardness of classically simulating the quantum feature map.

The honest assessment: they are the right way to think about near-term quantum ML for classification tasks, but practical advantage on real classical datasets has not yet been demonstrated. That is the frontier. The mathematics is correct; the engineering challenge remains.

References

-

Havlíček, V., Córcoles, A. D., Temme, K., Harrow, A. W., Kandala, A., Chow, J. M., & Gambetta, J. M. (2019). Supervised learning with quantum-enhanced feature spaces. Nature, 567(7747), 209–212. https://doi.org/10.1038/s41586-019-0980-2

-

Huang, H.-Y., Broughton, M., Mohseni, M., Babbush, R., Boixo, S., Neven, H., & McClean, J. R. (2021). Power of data in quantum machine learning. Nature Communications, 12(1), 2631. https://doi.org/10.1038/s41467-021-22539-9

-

Schuld, M., & Killoran, N. (2022). Is quantum advantage the right goal for quantum machine learning? PRX Quantum, 3(3), 030101. https://doi.org/10.1103/PRXQuantum.3.030101

-

Liu, Y., Arunachalam, S., & Temme, K. (2021). A rigorous and robust quantum speed-up in supervised machine learning. Nature Physics, 17(9), 1013–1017. https://doi.org/10.1038/s41567-021-01287-z

-

Hubregtsen, T., Wierichs, D., Gil-Fuster, E., Derks, P.-J. H. S., Faehrmann, P. K., & Meyer, J. J. (2022). Training quantum embedding kernels on near-term quantum computers. Quantum Machine Intelligence, 4(1), 6. https://doi.org/10.1007/s42484-022-00060-6

-

Schuld, M. (2021). Supervised quantum machine learning models are kernel methods. arXiv preprint arXiv:2101.11020. https://arxiv.org/abs/2101.11020

Rashan is a Data Science Professional and Quantum AI Researcher, and the Founder & CEO of Intellit — an AI automation agency building intelligent systems across fintech, banking, and enterprise sectors.

The Quantum Intelligence Digest

Join researchers and engineers who read Quaniq.